Update: more recent information can be found here

If you remember the old Windows music player Winamp, it came with an amazing visualizer named Milkdrop, written by a guy at nVidia named Geiss. The plugin performed beat detection, split the music into frequency buckets with an FFT, and then fed that info into a randomly selected “preset.” A preset is a set of equations and parameters controlling waveforms, colors, shapes, shaders, “per-pixel” equations (not actually evaluated per screen pixel, but on a smaller mesh that is interpolated), and more.
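To make the “frequency buckets” idea a bit more concrete, here is a rough sketch in Python of what that stage might look like. This is not Milkdrop’s actual code: the function names, the band edges, and the 1.5x beat threshold are all made up for illustration, and the pure-Python FFT is only there to keep the example free of dependencies.

```python
import cmath
import math


def fft(samples):
    """Recursive radix-2 Cooley-Tukey FFT; len(samples) must be a power of two."""
    n = len(samples)
    if n == 1:
        return [complex(samples[0])]
    even = fft(samples[0::2])
    odd = fft(samples[1::2])
    out = [0j] * n
    for k in range(n // 2):
        twiddle = cmath.exp(-2j * cmath.pi * k / n) * odd[k]
        out[k] = even[k] + twiddle
        out[k + n // 2] = even[k] - twiddle
    return out


def band_energies(samples, sample_rate, edges_hz):
    """Sum spectral magnitudes into buckets delimited by edges_hz (ascending)."""
    spectrum = fft(samples)
    n = len(samples)
    bands = [0.0] * (len(edges_hz) - 1)
    for k in range(1, n // 2):  # skip DC, keep positive frequencies only
        freq = k * sample_rate / n
        for i in range(len(bands)):
            if edges_hz[i] <= freq < edges_hz[i + 1]:
                bands[i] += abs(spectrum[k])
                break
    return bands


def is_beat(current, history, threshold=1.5):
    """Flag a beat when the current energy exceeds the recent average by a factor.

    The threshold is an arbitrary illustrative value, not a Milkdrop constant.
    """
    if not history:
        return False
    average = sum(history) / len(history)
    return current > threshold * average


# Hypothetical setup: 512 samples at 8192 Hz gives FFT bins 16 Hz wide.
SAMPLE_RATE = 8192
N = 512
# A pure 64 Hz tone sits exactly on bin 4, so it should land in the bass bucket.
tone = [math.sin(2 * math.pi * 64 * t / SAMPLE_RATE) for t in range(N)]
# Band edges (in Hz) are assumptions chosen for this example.
bass, mid, treble = band_energies(tone, SAMPLE_RATE, [20, 250, 2000, 4096])
# Compare the current bass energy against a made-up history of quieter frames.
beat = is_beat(bass, [100.0] * 8)
```

A real visualizer would run something like this on every chunk of audio and hand the band values and the beat flag to the preset’s equations.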

Most of the preset files have ridiculous names like:

- “suksma + aderassi geiss – the sick assumptions you make about my car [shifter’s esc shader] nz+.milk”

- “lit claw (explorers grid) – i don’t have either a belfry or bats bitch.milk”

- “Eo.S. + Phat – chasers 12 sentinel Daemon – mash0000 – multi-band time-distortion aurora granules.milk”

- “Goody + martin – crystal palace – Schizotoxin – The Wild Iris Bloom – mess2 nz+ i have no character and feel entitled to one.milk”

Milkdrop was originally only for Windows and was not open-source, so a few very smart folks got together and re-implemented Milkdrop in C++ under the LGPL license. The project created plugins to visualize audio from Winamp, XMMS, iTunes, JACK, PulseAudio, and ALSA. Pretty awesome stuff.

This was a while ago, but recently I wanted to try it out on OSX. I quickly realized that the original iTunes plugin code was out of date by about 10 major versions and wasn’t even remotely interested in compiling, and it was also missing a bunch of dependencies built for OSX.

So I went ahead and updated the iTunes plugin code, mostly in a zany language called Objective-C++, which combines C++ and Objective-C. It’s a little messed up but I guess it works for this particular case. I grabbed the dependencies and built them by hand, and included static versions for OSX in the repository to make it much easier for others (and myself) to build.

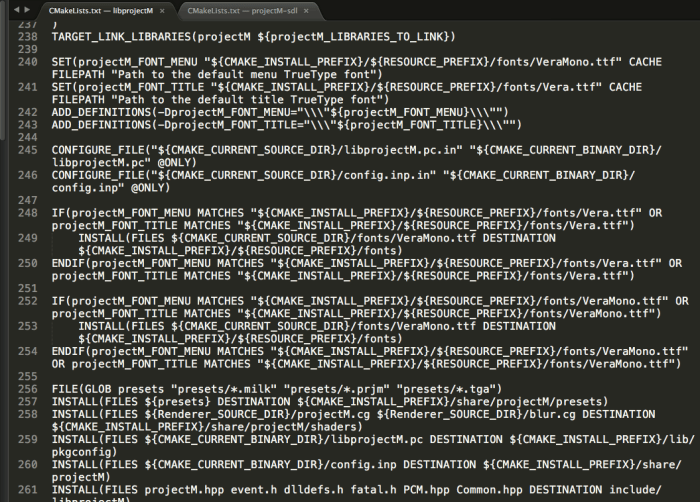

Getting it to build was no small feat either. Someone made the unfortunate decision to use cmake instead of autotools. I can understand the hope and desire to use something better than autotools, but cmake ain’t it. Everything is written in some ungodly undocumented DSL that is unlike any other language you’ve used and it makes a giant mess all over your project folders like an un-housebroken puppy fed a laxative. I have great hope that the new Meson build system will be awesome and let us all put these miserable systems out to pasture. We’ll see.

Long story short, after a bunch of wrangling I got this all building as a native OSX iTunes plugin. With a bit of tweaking and tossing in the nVidia Cg library I got the quality and rendering speed to be top-notch and was able to reduce the latency between the audio and rendering, although I think there are still a few frames of delay I’d like to figure out how to reduce.

I wanted to share my plugin with Mac users, so I tried putting it in the Mac App Store. What resulted was a big fat rejection from Apple because I guess they don’t want to release plugins via the App Store. You can read about those travails here. I think that unpleasant experience is what got me to start this blog so I could publicly announce my extreme displeasure with Apple’s policies towards developers trying to contribute to their ecosystem.

After trying and failing to release via the App Store, I put the plugin up on my GitHub, along with a bunch of the improvements I made. I forked the SourceForge version, because SourceForge can go wither and die for all I care.

I ended up trying to get it running in a web page with Emscripten and on an embedded Linux device (Raspberry Pi). Both of these efforts required getting it to compile against OpenGL ES (GLES), the embedded spec for OpenGL. Mostly I accomplished this by #ifdef’ing out immediate-mode GL calls like glRect(). After a lot more ferocious battling with cmake I got it running in SDL2 on Linux on a Raspberry Pi. Except it goes about 1/5 fps, lol. I need to spend some time profiling to see if that can be sped up.

I also contacted a couple of the previous developers and the maintainers on SourceForge. They were helpful and gave me commit access to SF. One said he was hoarding his GLES modifications for the iOS and Android versions. Fair enough I guess.

Now we’re going to try fully getting rid of the crufty old SourceForge repo, moving everything to GitHub. We got a snazzy new GitHub homepage and even our first pull request!

My future dream for this project would be to make an embedded Linux device that has an audio input jack and outputs visualizations via HDMI, possibly a Raspberry Pi, maybe something beefier. Apparently some crazy mad genius implemented this mostly in an FPGA but has stopped producing the boards. I don’t know if I’m hardcore enough to go that route. Probably not.

In conclusion, it’s been nice to be able to take a nifty library and update it, improve it, put out a release that people can use and enjoy, and work with other contributors to make software for making pretty animations out of music. Hopefully with our fresh new homepage and an official GitHub repo we will start getting more contributors.

I recorded a crappy demo video. The actual visualizer is going 60fps and looks very smooth, but the desktop video recorder I used failed to capture at this rate so it looks really jumpy. It’s not actually like that.

Any chance this project might actually make it onto the Raspberry Pi? You can add an AUX input via USB for under $5 https://www.adafruit.com/product/1475 and then output the visualization over HDMI… I actually want to use it in my car so a lower resolution for better frame rate would be fine by me. I’d just like to see something other than “AUX Device Ready” in my dash all the time.


I’d love to but I just haven’t had time to do the work and I don’t really know enough about GLES/VBOs. Feel free! It’s open source!
